
This post is about two pieces of writing released this week, and how easy it is for even smart people to be wrong. The story starts with an open letter to the UK Government signed by 200+ scientists, condemning the government’s response to the Coronavirus epidemic. The letter argued that the response was not forceful enough, and that the government was risking lives with its current course of action. The letter was widely reported and even made it to the BBC front page, pretty compelling stuff [1].

The issue is that as soon as you start scratching beneath the surface, all is not quite what it seems. Of the 200+ scientists, about 1/3 are PhD students. That is not an issue in and of itself, but picking out some of the subjects we’ve got:

And so on. Just eyeballing the list, I'd hazard a guess that the vast majority of the PhD student signatories are non-specialists in this field. Even among the lecturers we’ve got the following specialisms:

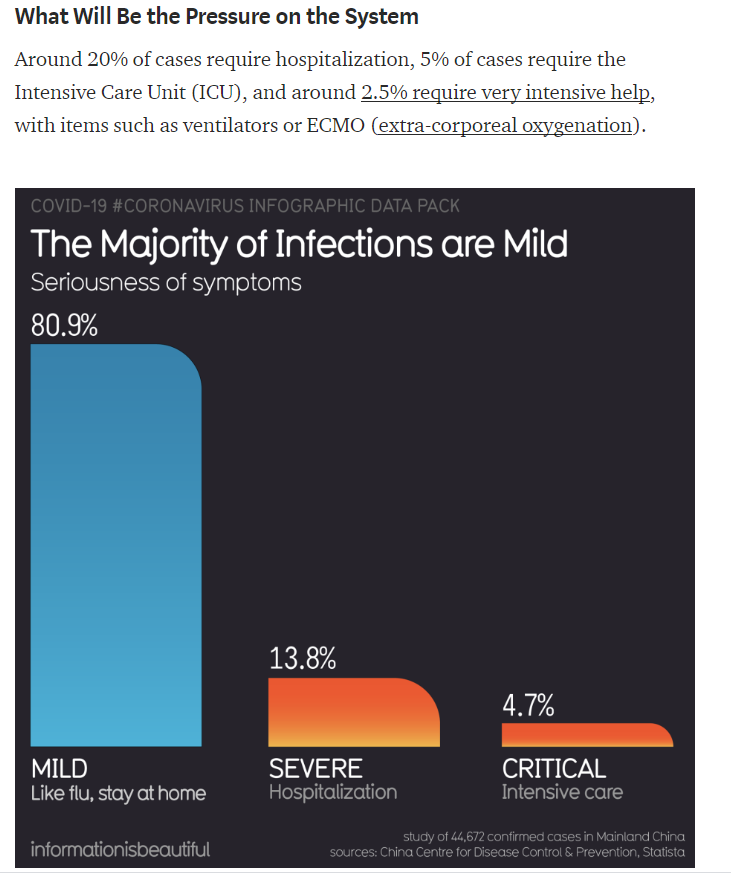

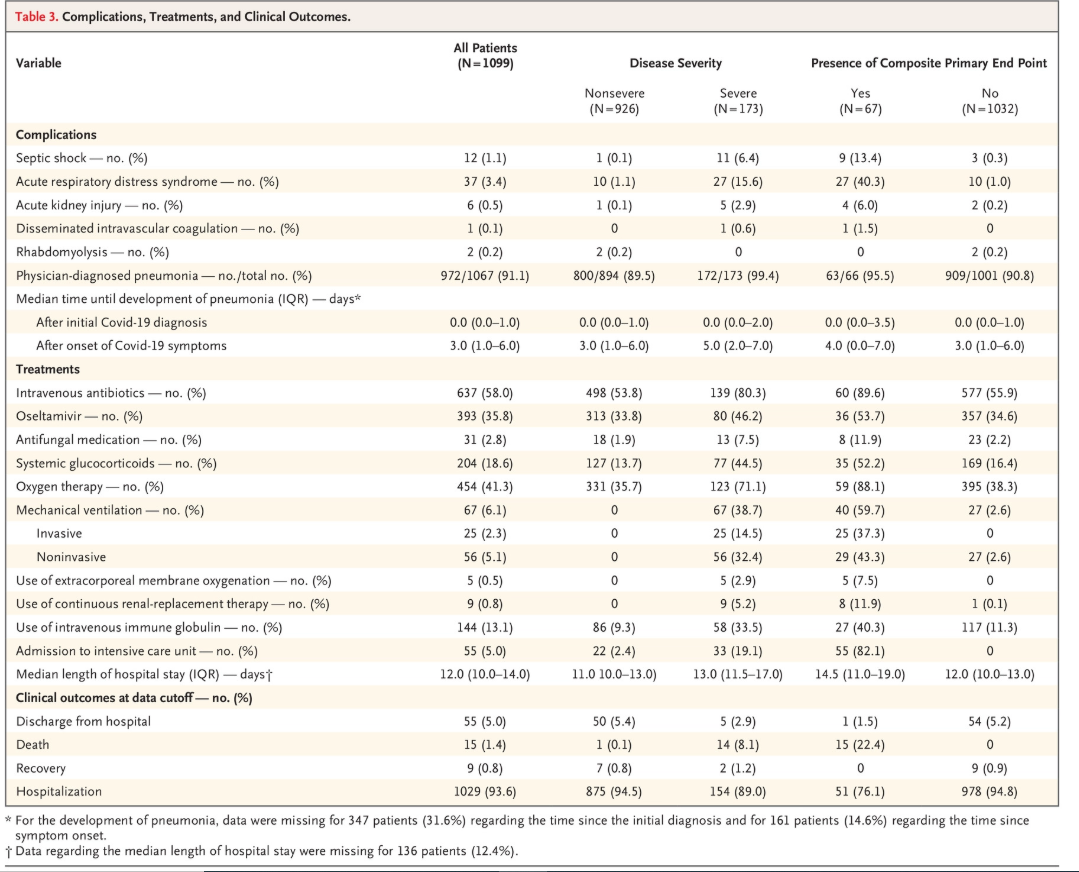

Just because they are not specialists, what’s the problem? Shouldn’t they be allowed to have an opinion? I’ve got two responses to this.

The first is not a criticism of the signatories; it’s with how this message was relayed in the media. Simply stating that 200+ scientists have written an open letter condemning the Government response is misleading if no information is included about the relative expertise of the scientists. To call a lecturer in Employment Law a scientist is stretching the definition of scientist beyond any reasonable bounds! The BBC article gave no indication that the letter had been signed by such a range of individuals.

The second issue is with the references provided within the letter to back up the points being made: these sources contain some pretty glaring errors! I'll explain below what these errors are. I think we can and should hold the scientists accountable for not properly scrutinising their sources; to my mind this is exactly the issue with highly educated people with non-relevant specialisms commenting on an unfamiliar area. So what is my issue with their sources?

Tomas Pueyo’s Medium article

The open letter referenced the following Medium.com article written by Tomas Pueyo, which appears (ironically?) to have gone viral [2].

Once again, this is an article written by a non-specialist, and is more evidence of how a small amount of knowledge can be a very dangerous thing! I feel like there's a theme here. Before we start digging into the article too much, what is this guy's background? Well, for one thing he has written a book on Star Wars, "The Star Wars Rings: The Hidden Structure Behind the Star Wars Story" by Tomas Pueyo Brochard, not a great start. He's worked at a number of tech start-ups and writes online quite a lot; his Medium articles are predominantly about public speaking, building viral apps, and effective writing.
Going viral appears to be something he is very good at, so I'd be inclined to listen to his advice on that subject; the analysis of infectious diseases, not so much!

The first thing that struck me as strange when reading his article was the following section (pasted below), which gives statistics on the proportion of cases requiring hospitalisation. Note the numbers are worryingly big! Something felt off about this, then I realised: the numbers don’t actually add up to 100%. Hmm, that’s a bit strange... what happens when we start to scratch under the surface?

Tomas states that 5% of cases require ICU admission and 2.5% require very intensive treatment, i.e. mechanical ventilation or similar. I followed the link that Tomas quoted. Firstly, the actual value in the study is 2.3%; I'm not sure why he has rounded up to 2.5%, but that’s quite minor. The more serious issue is that the study was an analysis of the outcomes of approx. 1,000 patients who were admitted to hospital. The study itself appears to be rigorous and is presented in a clear manner; the issue is purely in how the figures have been quoted by Tomas.

Link to article: https://www.nejm.org/doi/full/10.1056/NEJMoa2002032

In his Medium article Tomas states that 2.5% of all cases require mechanical ventilation or some other form of intensive treatment, and that 5% of all cases require ICU admission. Based on the data he has presented, these figures should actually be scaled by the % of cases requiring hospitalisation (given by Tomas as 20%), i.e. 2.5% × 20% = 0.5% of all cases require the intensive intervention, not 2.5%, and 5% × 20% = 1% of all cases require ICU admission, not 5%. So Tomas is out by a factor of 5, just by correcting using his own estimate of the hospitalisation rate.

Here is the relevant table from the study:

Okay, so that first sentence has two mistakes already. What about that graphic which didn’t add up to 100%?

I went on the following journey to try to find the original study the data is taken from: Medium article -> Information is Beautiful (another website), which produced the infographic -> a Google Spreadsheet provided by Information is Beautiful, which contains 3 links. The links were the following:

1) A Guardian article which is seemingly unrelated to this specific point?! https://www.theguardian.com/world/2020/mar/03/italy-elderly-population-coronavirus-risk-covid-19

2) A Statista article which repeats the same values: https://www.statista.com/chart/20856/coronavirus-case-severity-in-china/

3) An article published by the Chinese Centre for Disease Control (CCDC): https://github.com/cmrivers/ncov/blob/master/COVID-19.pdf

Ah ha, this article actually contains the research being quoted! It appears to have been carried out by respectable scientists on real clinical data. Okay, now we are getting somewhere. The article is an analysis of the characteristics of approx. 44k confirmed cases and, among other things, breaks out the percentage of these confirmed cases which required hospitalisation.

We find out in this paper what happened to the missing 0.6%: in the original paper, these cases were marked as ‘missing’, i.e. not reported. A fairly innocuous explanation, but one I would have preferred to have been noted in the Medium graphic in some way. Add an asterisk and explain at the bottom, or allocate proportionately across the other categories, but do something about it!

This is where I am going to strongly disagree with Tomas’s analysis. Hospitalisation numbers are very unlikely to be underreported: the CCDC article explains that all hospitals were required by law to report any cases to the CCDC. The possibility of over-reporting was handled by including citizens’ national identity numbers in the data gathered, to prevent double counting.
In other words, the absolute numbers of hospitalisations and deaths quoted in the study are probably pretty accurate, and the number of confirmed cases is also probably pretty accurate. We have to be careful, though, when applying this denominator to the population as a whole. Using the number of confirmed cases as a proxy for the actual number of cases is very prone to underreporting, so I would consider this value (approx. 20% requiring hospitalisation) as simply an upper bound on the percentage of infections which require hospitalisation.

The Medium article, however, is not careful about this, and references the CCDC study as if it were an estimate of what percentage of the total population would require hospitalisation if infected by the virus. These are very different things! Without answering the question of what ratio of confirmed cases to actual infections we are dealing with in the underlying Chinese data, we are not making a valid inference about how these stats will apply to the total population.

Note that the word 'case' in the way used by Tomas might actually be valid. Here is the CDC definition:

CASE. In epidemiology, a countable instance in the population or study group of a particular disease, health disorder, or condition under investigation. Sometimes, an individual with the particular disease.

So in terms of the study, the hospitalisation rate per Case (where Case has a capital C) could correctly be said to be 20%. I suspect that Tomas has not understood this subtlety, and if he has, then he has presented the data in a very misleading way. Moreover, when talking about the % of future cases that will lead to hospitalisation, this figure would require adjustment. This subtlety is never explained.

The UK Government has stated they believe the number of actual infections may exceed the number of reported cases by a factor of 5-10. If we use the value of 5, to be on the more conservative side of their range, then we need to scale down all of Tomas’s numbers as follows:
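As a rough sketch of that arithmetic, the Python below applies the two corrections discussed in this post in sequence: first scaling the ICU and ventilation figures by the 20% hospitalisation rate, then dividing everything by the under-reporting factor of 5. The constant and function names are my own (hypothetical), the inputs are simply the figures quoted in this post, and none of this is code from the studies themselves.

```python
# Figures quoted in this post, expressed as fractions rather than percentages.
HOSPITALISED_SHARE_OF_CONFIRMED = 0.20     # hospitalisation rate given by Tomas
ICU_SHARE_OF_HOSPITALISED = 0.05           # ICU figure quoted in the Medium article
VENTILATION_SHARE_OF_HOSPITALISED = 0.025  # as quoted in the article (the study says 2.3%)
INFECTIONS_PER_CONFIRMED_CASE = 5          # assumption: low end of the 5-10 range


def per_confirmed_case(share_of_hospitalised):
    """Convert a share of hospitalised patients into a share of confirmed cases."""
    return share_of_hospitalised * HOSPITALISED_SHARE_OF_CONFIRMED


def per_infection(share_of_confirmed, infections_per_case=INFECTIONS_PER_CONFIRMED_CASE):
    """Convert a share of confirmed cases into a share of all infections."""
    return share_of_confirmed / infections_per_case


def scaled_rates():
    """Return {outcome: (share of confirmed cases, share of all infections)}."""
    confirmed = {
        "hospitalisation": HOSPITALISED_SHARE_OF_CONFIRMED,
        "ICU admission": per_confirmed_case(ICU_SHARE_OF_HOSPITALISED),
        "ventilation": per_confirmed_case(VENTILATION_SHARE_OF_HOSPITALISED),
    }
    return {name: (rate, per_infection(rate)) for name, rate in confirmed.items()}


if __name__ == "__main__":
    for name, (confirmed, infections) in scaled_rates().items():
        print(f"{name:<16} {confirmed:6.2%} of confirmed cases  {infections:6.2%} of infections")
```

Passing `infections_per_case=10` to `per_infection` gives the other end of the Government's range, which halves each of the final figures again.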

Note that the ICU and ventilation numbers are now lower than the estimated fatality rate from Coronavirus! The UK Government has stated that their estimate of the fatality rate is approximately 1%. So even though we’ve now made these numbers internally consistent, they are now too low to be believable... Why is this?

The first study which Tomas linked to (the table I’ve pasted above) is actually very immature: of the 1,099 patients in the study, 94% were still in hospital at the end of the study, i.e. they could very easily get much worse. The 2.5% and 5% are therefore underestimates! Once again we’ve scratched under the surface and found another glaring error. Nowhere in his article did Tomas mention that the 2.5% requiring ventilation and the 5% requiring ICU admission were based on just 25 and 50 people respectively, or that he had not adjusted for the bias from right censoring (a study ending before a final value is known): en.wikipedia.org/wiki/Censoring_(statistics)

So what's my point? As numerically literate people who are non-experts in infectious disease and epidemiology (and I consider myself in this category by the way, I am very ignorant of almost all epidemiology) we have to be soooo careful when producing analysis on Coronavirus which is getting disseminated to the wider public. It’s more important than ever to properly scrutinise sources, and to stop and think about whether the person you are listening to truly knows what they are doing and has taken the time to be careful in their analysis.

I feel like there was a breakdown in reporting at multiple stages here. Tomas misinterpreted various otherwise well-written and rigorous studies; the 200+ ‘scientists’ then appear to have swallowed this uncritically and cited it as evidence to the UK Government; and the BBC then quoted these scientists sending up their warning cry without really scrutinising the expertise of the scientists or the sources they referenced.
[1] Open letter to the UK Government: http://maths.qmul.ac.uk/~vnicosia/UK_scientists_statement_on_coronavirus_measures.pdf

[2] Tomas Pueyo's Medium article: https://medium.com/@tomaspueyo/coronavirus-act-today-or-people-will-die-f4d3d9cd99ca

Author: I work as an actuary and underwriter at a global reinsurer in London.
